The S2CT Dynamic, Secure, Peer-to-Peer, Private Data Sharing Network with Blockchain

As early as mid-2006, S2CT principals realized that supply chain companies and stakeholders already record and save, in their private company databases, all the relevant data about the products they ship, transport, store, and ultimately deliver to end customers. This data uniquely identifies the cargo: when and how it was shipped, what special handling arrangements applied, when it arrived at a shipping depot, how it was consolidated and shipped with other cargo, when it left the depot, when it arrived at the shipping terminal, and so on. The data from each change of custody was in a database somewhere, put there by whatever technology the particular company found appropriate for its business, unencumbered by any need for technology compatibility with others. The problem was how to allow each of these companies to share this private data safely and securely with other companies, without compromising its privacy, whenever there was a clear business benefit.

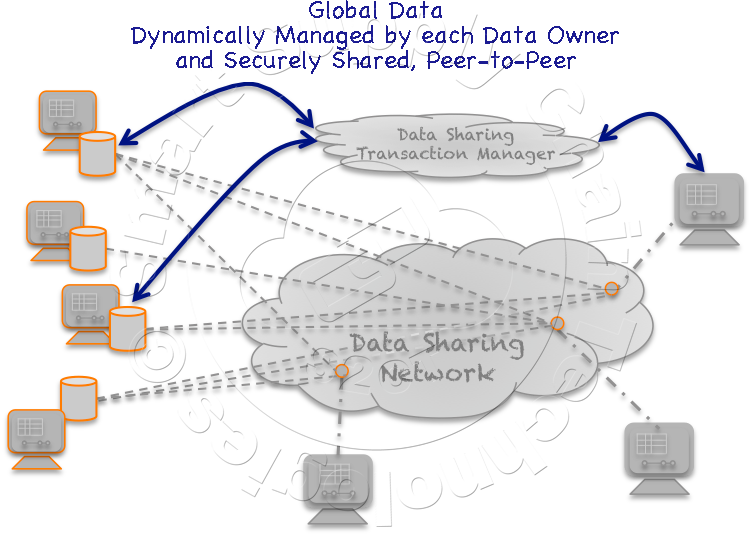

S2CT principals have been thinking about and developing components of a distributed private data sharing technology ever since they theorized an architecture that is in many ways similar to what the industry today calls Blockchain. The original concept, like today's, could track and monitor "things" across a far-flung landscape of distributed private databases, across a Blockchain-of-Custody. The original goal was to track and monitor things as they moved through their supply chain journeys and chain-of-custody without relying on a central data repository or on specific electronic hardware attached to them. In its simplest interpretation, Blockchain is a distributed ledger that securely records transactions between two parties. The transfer of something, bitcoins or cargo for example, from one party to another is securely recorded in each party's private ledger. Each record represents a block, and a number of blocks strung together form a Blockchain. Without pressing the analogy too far, S2CT sees and uses its Exchange Verification (EV) as a form of Blockchain, in this case a Blockchain-of-Custody for cargo. When a cargo arrives at a destination, the carrier and the receiving party execute an Exchange Verification. The carrier reports what it has delivered, along with any applicable data about its condition, to the carrier's Asset Management System, and the receiver reports what it has received, along with the same condition data, to its own Asset Management System. A cloud application compares the two reports and, if they agree, makes an EV entry in both Asset Management System databases. These two disjoint entries, in different private databases, represent a successful change of custody and a block in the Blockchain. We have evolved these original concepts and the underlying technologies over the years into what we now refer to simply as the S2CT Data Sharing Network.
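
The Exchange Verification step can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not S2CT's implementation: the `AssetManagementSystem` class, the report fields, the exact-match comparison, and the SHA-256 digest stored with each entry are all hypothetical choices made for the example.

```python
import hashlib
import json
from dataclasses import dataclass, field


@dataclass
class AssetManagementSystem:
    """Hypothetical stand-in for one party's private Asset Management database."""
    owner: str
    ev_ledger: list = field(default_factory=list)  # this party's private EV entries


def report_digest(report: dict) -> str:
    # Assumption: canonical JSON + SHA-256, so both parties can later confirm
    # that their separate entries describe the same change of custody.
    canonical = json.dumps(report, sort_keys=True).encode()
    return hashlib.sha256(canonical).hexdigest()


def exchange_verification(carrier: AssetManagementSystem, delivered: dict,
                          receiver: AssetManagementSystem, received: dict) -> bool:
    """Plays the role of the cloud application: compare the two reports and,
    only if they agree, write one EV entry into each party's own ledger."""
    if delivered != received:
        return False  # no agreement, so no change of custody is recorded
    ev_hash = report_digest(delivered)
    carrier.ev_ledger.append({"counterparty": receiver.owner, "cargo": delivered, "ev_hash": ev_hash})
    receiver.ev_ledger.append({"counterparty": carrier.owner, "cargo": received, "ev_hash": ev_hash})
    return True


carrier = AssetManagementSystem("Carrier")
receiver = AssetManagementSystem("Receiver")
report = {"cargo_id": "CNTR-001", "pieces": 12, "temp_c": 4.0}  # hypothetical sample
ok = exchange_verification(carrier, report, receiver, dict(report))
print(ok, carrier.ev_ledger[0]["ev_hash"] == receiver.ev_ledger[0]["ev_hash"])  # True True
```

The two entries live in separate objects, mirroring the two disjoint entries in different private databases; nothing is written to a shared store.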

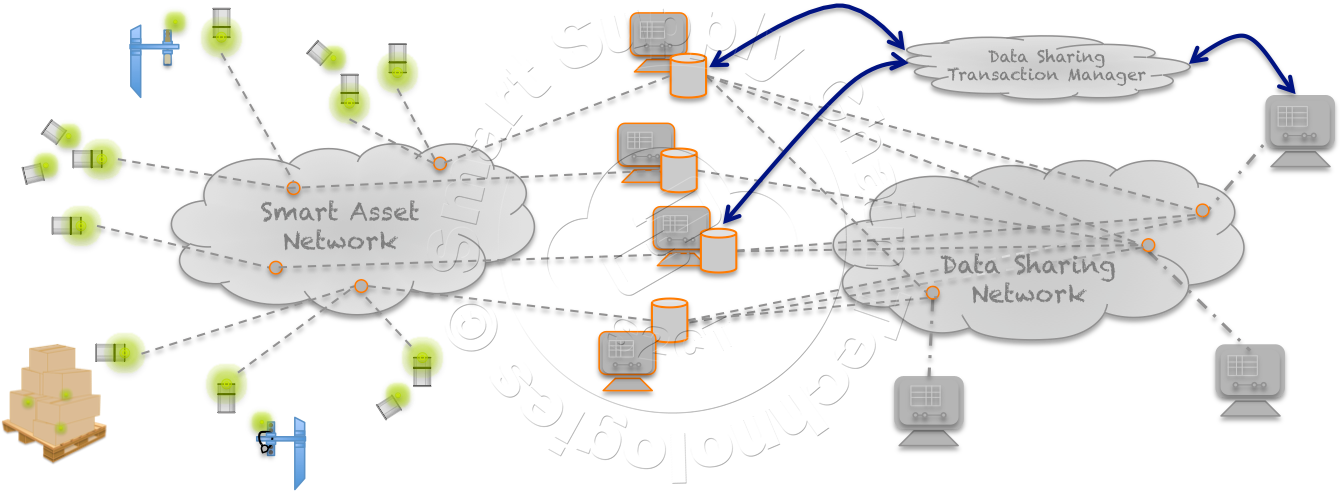

The S2CT Data Sharing Network fundamentally connects the Data Sharing Databases of different companies in secure peer-to-peer connections so that they can share data when, and for as long as, their interests intersect. S2CT's Secure Peer-to-Peer Data Sharing Network Technology is applicable to a wide range of secure data sharing Commerce 4.0 applications, including supply chain asset management. In a supply chain application, companies share relevant data from their underlying Asset Management Systems to track and monitor assets (cargo, equipment, containers, vehicles, etc.) as they move through the supply chain and their chain-of-custody. A comprehensive description of a supply chain journey, including the use of the Data Sharing Network, is presented in S2CT's White Paper entitled "IBM Cloud, Blockchain, S2CT's Global Asset Management Architecture and Ubiquitous Wi-Fi Render GPS and Digital Cellular Networks Communications Obsolete in the Global Supply Chain" available on the S2CT website.

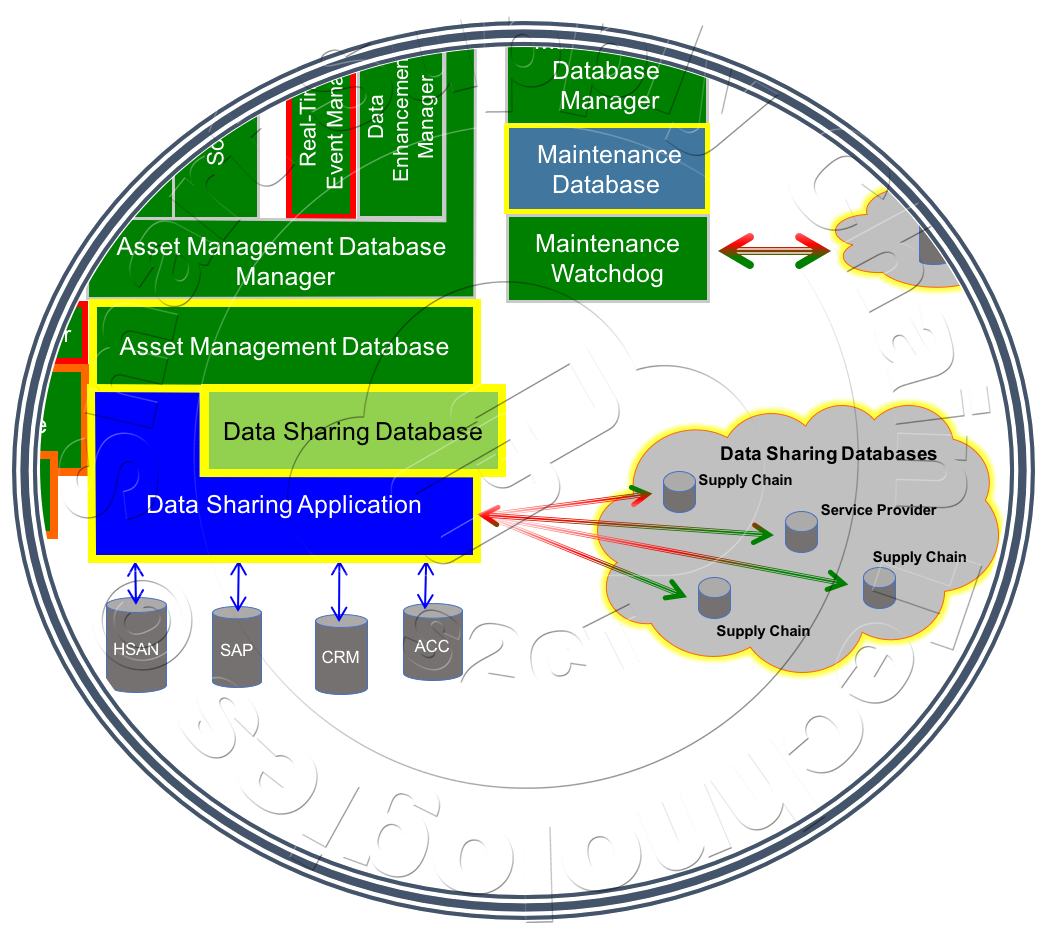

Structurally, each company's Data Sharing Network is composed of two elements: a "Registered" Data Sharing Application (DSApp) and a Data Sharing Database (DSDb), typically hosted on the same server as the company's Asset Management System. The DSApp provides the mechanism for the Data Sharing Network's registration and administration, and it manages the network's data and communications security. The DSDb provides a common data interface, both between Data Sharing Networks and between the DSApp and local databases, such as the Asset Management Database and the various legacy databases the DSApp might exchange data with.

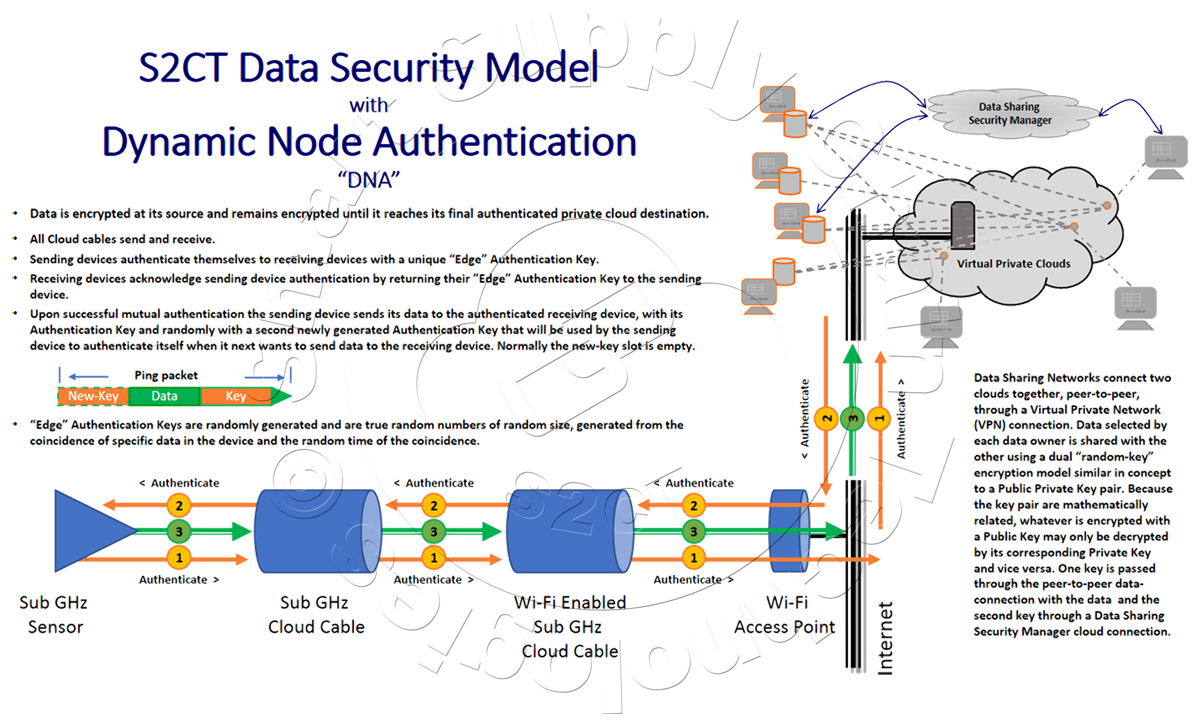

Registration in the S2CT Data Sharing Network Cloud and use of the Data Sharing Network technology are free. Data Sharing Network users will pay a small micro-transaction fee for requesting data but will be able to share their own data for free. Registering a Data Sharing Network provides it with a unique ID used when communicating with other Data Sharing Networks. Registration is when and where the Data Sharing Network's security begins: it generates the network's initial Dynamic Node Authentication key and its PKI key pair, used to encrypt and decrypt data communicated between Data Sharing Networks.
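
A registration service along these lines can be sketched with Python's standard library. The details of Dynamic Node Authentication are in the separate white paper and are not reproduced here; purely for illustration, this sketch assumes the initial authentication key is a random shared secret checked with a generic HMAC-SHA256 challenge-response, and that "dynamic" means the Cloud can reissue that key. `RegistrationCloud`, `respond`, and the key sizes are hypothetical, and the PKI key pair is left out.

```python
import hashlib
import hmac
import secrets
import uuid


class RegistrationCloud:
    """Hypothetical stand-in for the S2CT Data Sharing Network Cloud registry."""

    def __init__(self):
        self._auth_keys = {}  # network ID -> current authentication key

    def register(self):
        """Issue a unique network ID and an initial random authentication key."""
        network_id = str(uuid.uuid4())
        auth_key = secrets.token_bytes(32)
        self._auth_keys[network_id] = auth_key
        return network_id, auth_key

    def rotate_key(self, network_id):
        """Assumed 'dynamic' step: replace the node's key with a fresh random one."""
        if network_id not in self._auth_keys:
            raise KeyError(network_id)
        self._auth_keys[network_id] = secrets.token_bytes(32)
        return self._auth_keys[network_id]

    @staticmethod
    def challenge():
        return secrets.token_bytes(16)  # fresh random nonce for each attempt

    def verify(self, network_id, nonce, response):
        key = self._auth_keys.get(network_id)
        if key is None:
            return False  # unregistered networks are never authenticated
        expected = hmac.new(key, nonce, hashlib.sha256).digest()
        return hmac.compare_digest(expected, response)  # constant-time comparison


def respond(auth_key, nonce):
    """Node side: prove possession of the key without sending the key itself."""
    return hmac.new(auth_key, nonce, hashlib.sha256).digest()


cloud = RegistrationCloud()
net_id, key = cloud.register()
nonce = cloud.challenge()
print(cloud.verify(net_id, nonce, respond(key, nonce)))  # True
```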

When two Data Sharing Networks connect in a peer-to-peer connection, they do so through a Virtual Private Network (VPN)(1). The VPN connection is established only between two authenticated, registered DSApp nodes, in much the same way that two devices authenticate themselves and exchange data in the S2CT Data Security Model, with Dynamic Node Authentication. A comprehensive description of the S2CT Data Security Model, including Dynamic Node Authentication, is presented in S2CT's White Paper entitled "Data Security in the Global Supply Chain" available on the S2CT website. Once the nodes are authenticated and the connection is established, each node sends its Public Encryption Key to the other. Data is shared between the nodes using a dual "random-key" encryption scheme, similar in concept to a Public/Private Key (PPK) method(2). In this asymmetric encryption method, anyone can encrypt a message using the public key, but only the holder of the private key can decrypt it: the two keys are mathematically related, yet the private key cannot practically be derived from the public key. Put more simply, whatever is encrypted with a Public Key can be decrypted only with the paired Private Key. Data sent from one Data Sharing Network node to another is encrypted with the receiving node's Public Key. The receiving node decrypts the data with its Private Key, which is kept and managed by the S2CT Data Sharing Network Cloud, effectively acting as the "Certificate" provider. Both keys are randomly regenerated and redistributed.
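
The key exchange and "encrypt with the receiver's public key" flow can be illustrated with textbook RSA, swapped in here as a familiar public/private key scheme; S2CT's own "random-key" scheme is not specified in this paper. This toy uses tiny primes and encrypts byte by byte with no padding, so it is insecure and for illustration only; a production system would use a vetted cryptography library with large keys and padding such as OAEP. The `Node` class and `encrypt_for` function are hypothetical, and the Cloud's custody of private keys is not modeled.

```python
import secrets

# Tiny primes for a toy demo only; real RSA keys use primes hundreds of digits long.
TOY_PRIMES = [1009, 1013, 1019, 1021, 1031, 1033, 1039, 1049, 1051, 1061]
# Common public exponent. It is prime and larger than any p - 1 here,
# so it is always coprime to (p - 1) * (q - 1).
E = 65537


class Node:
    """Hypothetical Data Sharing Network node holding one toy RSA key pair."""

    def __init__(self, name):
        self.name = name
        self.regenerate_keys()

    def regenerate_keys(self):
        # "Random-key": draw two distinct primes at random for a fresh pair.
        p, q = secrets.SystemRandom().sample(TOY_PRIMES, 2)
        n, phi = p * q, (p - 1) * (q - 1)
        self.public_key = (n, E)                  # safe to send to the peer
        self._private_key = (n, pow(E, -1, phi))  # d = E^-1 mod phi (Python 3.8+)

    def decrypt(self, ciphertext):
        n, d = self._private_key
        return bytes(pow(c, d, n) for c in ciphertext)


def encrypt_for(recipient_public_key, plaintext):
    """Encrypt with the *receiving* node's public key, one byte at a time (toy only)."""
    n, e = recipient_public_key
    return [pow(b, e, n) for b in plaintext]


market = Node("Produce Market")
provider = Node("Produce Provider")
# After authentication, each node sends its public key to the other node.
message = b"Truck TRK-7 ETA 14:30"
ciphertext = encrypt_for(provider.public_key, message)  # intended for provider's private key
print(provider.decrypt(ciphertext))  # b'Truck TRK-7 ETA 14:30'
provider.regenerate_keys()  # fresh random pair; the new public key would be re-sent
```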

The DSApp manages all data written to and retrieved from its DSDb. It also manages requests for data to and from other Data Sharing Networks, and the Artificial Intelligence(3) (AI) rules for managing impromptu dynamic connections. Through the DSApp, the Data Sharing Network Administrator connects the DSDb to the company's Asset Management Database and to any other databases available from the server that might provide data pertinent to asset management, such as order entry, shipping, receiving, and finance. The Administrator also sets up the DSApp's data sharing rules, for example: share Basic Data with any authorized and authenticated Data Sharing Network, and identify "Trusted" Data Sharing Network requesters with which to share various levels of extended data.
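
Sharing rules like these can be expressed as a small table consulted on every incoming request. In the sketch below, the field names, the two trust levels, the requester IDs, and the default-deny treatment of unlisted fields are all assumptions made for illustration.

```python
from enum import IntEnum


class Trust(IntEnum):
    AUTHENTICATED = 1  # any authorized, authenticated Data Sharing Network
    TRUSTED = 2        # requester explicitly marked "Trusted" by the Administrator


# Hypothetical Administrator rules: minimum trust level required for each field.
SHARING_RULES = {
    "cargo_id": Trust.AUTHENTICATED,   # Basic Data
    "status": Trust.AUTHENTICATED,
    "eta": Trust.AUTHENTICATED,
    "temperature_log": Trust.TRUSTED,  # extended data
    "next_destination": Trust.TRUSTED,
}
TRUSTED_REQUESTERS = {"provider-net-42"}  # hypothetical network IDs


def requester_trust(requester_id, authenticated):
    if not authenticated:
        return None
    return Trust.TRUSTED if requester_id in TRUSTED_REQUESTERS else Trust.AUTHENTICATED


def filter_for_requester(record, requester_id, authenticated):
    """Return only the fields this requester may see; unlisted fields are never shared."""
    level = requester_trust(requester_id, authenticated)
    if level is None:
        return {}
    return {k: v for k, v in record.items()
            if k in SHARING_RULES and level >= SHARING_RULES[k]}


record = {"cargo_id": "C-17", "status": "in transit", "eta": "14:30",
          "temperature_log": [3.9, 4.1], "next_destination": "Depot B",
          "invoice_total": 1200}
print(sorted(filter_for_requester(record, "market-net-7", True)))
# ['cargo_id', 'eta', 'status']
```

Keeping the rules as data rather than code means the Administrator can change what is shared without touching the DSApp itself.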

AI plays an important role in the S2CT Data Sharing Network, as it has in many of the concepts developed and rolled out by S2CT principals over the years. Battery life of five-plus years for an electronic communications device was not the result of breakthrough battery technology but rather of the device itself intelligently deciding when to use the battery's energy and when not to. The device might decide to terminate a Wide-Area-Network connection attempt after observing that the attempt was struggling, noting that its probability of success was less than optimal and that the last connection was only hours ago, knowing its data would not be lost, and reasoning that the probability of connecting more easily within the next few hours was high.
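
The connection decision just described can be sketched as a function driven by a handful of parameters, the kind of small parameter table that is reused for every decision. The parameter names, threshold values, and the success-probability estimates passed in are all assumptions; how the device actually estimates those probabilities is not described here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LinkPolicy:
    """A few key parameters reused for every decision (hypothetical values)."""
    min_success_probability: float = 0.6  # below this, the attempt is 'unlikely'
    max_attempt_seconds: float = 30.0     # beyond this, the attempt is 'struggling'
    max_hours_between_reports: float = 12.0


def should_abort_attempt(p_success_now, seconds_spent, hours_since_last_report,
                         data_buffered, p_success_later, policy=LinkPolicy()):
    """Decide whether to stop a Wide-Area-Network connection attempt to save energy."""
    if not data_buffered:
        return False  # data could be lost: keep trying
    if hours_since_last_report >= policy.max_hours_between_reports:
        return False  # report is overdue: spend the energy
    struggling = seconds_spent > policy.max_attempt_seconds
    unlikely = p_success_now < policy.min_success_probability
    # Abort only if the attempt is going badly AND a later attempt looks easier.
    return (struggling or unlikely) and p_success_later > p_success_now


print(should_abort_attempt(0.3, 45, 3, True, 0.9))  # True
```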

AI is what makes the S2CT Data Sharing Network so powerful. You can think of Artificial Intelligence as the difference between software that uses a table with thousands of entries to determine every step it takes and software that uses a table with just a few key parameters, applied over and over again, to make decisions around ever-changing data and circumstances. AI in the Data Sharing Application determines which data sharing connections to make and when to terminate them, which authorized data to share with which supply chain partner based on reasoned benefits, and whether an EV is successful and, if it isn't, what to do about it. It sounds simple, but in many cases it's not!

When the EV Blockchain-of-Custody is not digitally shared, it is the DSApp's AI that non-heuristically finds the correct Data Sharing Network to connect with, based on reasoning. The DSApp can dynamically decide to share cargo-specific historical temperature data with a receiving supply chain partner to facilitate a corrective action that might prevent the cargo from deteriorating. When the delivering party's and the receiving party's EV requests report slightly different data, temperatures from two different sensors for example, the AI can either reject the EV and issue a warning to be resolved by personnel, or conclude that, although different, the reported temperatures are essentially equivalent. Of course, the level of freedom "given" to the DSApp's AI is entirely up to its user, the Administrator, and will very likely evolve over time as trust builds.
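
The temperature example can be sketched as a tolerance check whose latitude is set by the Administrator. The `EVPolicy` fields, the 0.5 °C tolerance, the report fields, and the rule that cargo identity and piece count must match exactly are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EVPolicy:
    """Freedom the Administrator grants the AI (hypothetical values)."""
    auto_resolve: bool = True      # may the AI accept small differences on its own?
    temp_tolerance_c: float = 0.5  # differences up to this are 'essentially equivalent'


def evaluate_ev(delivered, received, policy=EVPolicy()):
    """Return (accepted, reason) for a pair of EV reports."""
    for key in ("cargo_id", "pieces"):
        if delivered[key] != received[key]:
            return False, f"{key} mismatch: warn personnel"
    diff = abs(delivered["temp_c"] - received["temp_c"])
    if diff == 0:
        return True, "exact match"
    if policy.auto_resolve and diff <= policy.temp_tolerance_c:
        return True, f"temperatures differ by {diff:.1f} C: treated as equivalent"
    return False, f"temperatures differ by {diff:.1f} C: warn personnel"


carrier_report = {"cargo_id": "C-17", "pieces": 12, "temp_c": 4.0}
receiver_report = {"cargo_id": "C-17", "pieces": 12, "temp_c": 4.3}
print(evaluate_ev(carrier_report, receiver_report))
```

Setting `auto_resolve=False` models an Administrator who has not yet extended that trust: any temperature difference is then sent to personnel.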

Operationally, the S2CT Data Sharing Network model is built around the notion that each data sharing connection operates independently as a separate peer-to-peer connection. Each network's DSApp manages these independent connections, sending and receiving data directly from and to its DSDb. The source data being shared is retrieved by the DSApp from "local" databases, such as the Asset Management Database and other local databases that hold data relevant to the specific data sharing connection. Relevant data is any data that the source data provider believes will benefit its interests. For example, the source data provider's supply chain management might be enhanced by a specific receiving party knowing the next destination of a vehicle delivering a cargo. Data retrieved by the DSApp from the source provider's shipping database is copied into the DSDb so that all Data Sharing communications use a common format and protocol. Each DSApp manages the complexity of interfacing with disparate database types and formats, relying on ODBC(4) and JDBC(5) to ease those rigors. All of this is set up and controlled by the Data Sharing Network Administrator, either explicitly or through dynamic AI rules.
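
The copy from a local database into the DSDb can be sketched with Python's built-in `sqlite3` module, which stands in here for the ODBC or JDBC drivers a real DSApp would use. Both table layouts, the `shared_events` common format, and the sample row are hypothetical.

```python
import sqlite3

# Stand-in for a company's local shipping database (reached via ODBC/JDBC in practice).
shipping_db = sqlite3.connect(":memory:")
shipping_db.execute("CREATE TABLE shipments (ship_no TEXT, vehicle TEXT, next_stop TEXT, departed TEXT)")
shipping_db.execute("INSERT INTO shipments VALUES ('S-100', 'TRK-7', 'Depot B', '08:15')")

# Stand-in for the DSDb, using a hypothetical common event format.
dsdb = sqlite3.connect(":memory:")
dsdb.execute("CREATE TABLE shared_events (asset_id TEXT, event TEXT, value TEXT, event_time TEXT)")


def export_next_destinations(src, dst):
    """Copy vehicle next-destination data from the local schema into the common DSDb format."""
    rows = src.execute("SELECT vehicle, next_stop, departed FROM shipments").fetchall()
    events = [(vehicle, "next_destination", stop, departed) for vehicle, stop, departed in rows]
    dst.executemany("INSERT INTO shared_events VALUES (?, ?, ?, ?)", events)
    dst.commit()
    return len(events)


print(export_next_destinations(shipping_db, dsdb))  # 1
print(dsdb.execute("SELECT * FROM shared_events").fetchall())
```

Only the mapping function knows the local column names; peers see nothing but the common `shared_events` rows.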

Think about a Produce Market managing its inventory. The Produce Manager places an Order for chilled produce with a local provider that supplies the market on a regular basis. The Produce Market and the Produce Provider both have Data Sharing Networks and have shared supply chain data previously.

The Produce Market's Order Entry System triggers the Produce Market's DSApp to establish a secure Data Sharing Network connection with the Produce Provider's Data Sharing Network. Both companies can then monitor the Produce Provider's delivery vehicle throughout its journey: its progress, the cargo area's ambient temperature and humidity, and its on-time arrival at the Produce Market's Receiving Dock. Upon delivery, the Produce Market and the Produce Provider's driver execute an Exchange Verification, with both companies' Asset Management Systems comparing what was delivered to what was received. When the EV data match, an EV Blockchain-of-Custody entry is made in both companies' Asset Management Systems, recording the successful change of custody.
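
The life of this delivery's connection can be sketched as a small session object: opened by the order, fed with telemetry while the vehicle is en route, and closed by the EV. The chilled temperature band, the humidity limit, the state names, and the method names are hypothetical; real thresholds would come from the produce's handling requirements.

```python
from dataclasses import dataclass, field

CHILLED_RANGE_C = (1.0, 5.0)  # assumed acceptable cargo-area temperature band
MAX_HUMIDITY_PCT = 95.0       # assumed upper humidity limit


@dataclass
class DeliverySession:
    """Hypothetical peer-to-peer session opened by an order and closed by the EV."""
    order_id: str
    state: str = "open"
    alerts: list = field(default_factory=list)

    def on_telemetry(self, minute, temp_c, humidity_pct):
        if self.state != "open":
            raise RuntimeError("session is closed")
        lo, hi = CHILLED_RANGE_C
        if not lo <= temp_c <= hi:
            self.alerts.append((minute, "temperature", temp_c))
        if humidity_pct > MAX_HUMIDITY_PCT:
            self.alerts.append((minute, "humidity", humidity_pct))

    def on_exchange_verification(self, ev_matched):
        # A matching EV records the change of custody; otherwise flag a dispute.
        self.state = "custody_transferred" if ev_matched else "disputed"


def on_order_entry(order_id):
    """The Order Entry System's event triggers the DSApp to open the session."""
    return DeliverySession(order_id)


session = on_order_entry("PO-1001")
for minute, temp_c, humidity in [(0, 3.2, 85.0), (20, 5.6, 88.0), (40, 4.1, 96.5)]:
    session.on_telemetry(minute, temp_c, humidity)
session.on_exchange_verification(ev_matched=True)
print(session.state, session.alerts)
```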

In the big picture, the 90/10 rule applies. The 90/10 rule holds that 90% of a task can often be achieved with 10% of the effort required to complete it, while the last 10% requires 90% of the total effort. Most companies and supply chain stakeholders will get all the data they need just by sharing the data they have been collecting for years. Data like: when the factory or producer ships a product; the product's condition as it travels on its journey; when that product arrives at its first stop, the shipping depot; when it leaves the depot in a container, and the container's ID; when it is loaded onto a vessel; when it arrives at its destination port and when it clears customs; when it leaves the port for another transportation depot; and when it arrives at that depot and leaves again for delivery to its final destination. All this just by sharing data that is already readily available across the global supply chain. Powerful!
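
Assembling such a journey from several parties' shared data is largely a merge-and-sort. In the sketch below, each list stands for events one party might share from its DSDb; the parties, timestamps, container ID, and tuple layout are hypothetical sample data.

```python
from datetime import datetime

# Hypothetical events, each list as it might be shared from a different party's DSDb.
producer = [("2024-03-01T09:00", "Producer", "product shipped")]
depot = [("2024-03-03T07:45", "Shipping depot", "left depot in container CNTR-001"),
         ("2024-03-02T14:10", "Shipping depot", "arrived at depot")]
port = [("2024-03-04T06:30", "Origin port", "loaded onto vessel"),
        ("2024-03-19T16:05", "Destination port", "cleared customs")]


def build_journey(*shared_sources):
    """Merge events shared by several parties into one chronological journey."""
    events = [event for source in shared_sources for event in source]
    return sorted(events, key=lambda event: datetime.fromisoformat(event[0]))


for when, party, what in build_journey(port, producer, depot):
    print(when, party, what)
```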

(1) A VPN or Virtual Private Network is a method used to add security and privacy to private and public networks, like the Internet. VPNs are most often used by corporations to protect sensitive data. However, using a personal VPN is becoming increasingly popular as more interactions that were previously face-to-face move to the Internet. Privacy is increased with a VPN because the user's original IP address is replaced with one from the VPN provider. This method allows subscribers to obtain an IP address from any gateway city the VPN service provides. https://www.whatismyip.com/what-is-a-vpn/

(2) Public-key encryption is a cryptographic system that uses two keys: a public key known to everyone and a private or secret key known only to the recipient of the message. An important element of the public key system is that the public and private keys are related in such a way that only the public key can be used to encrypt messages and only the corresponding private key can be used to decrypt them. Moreover, it is virtually impossible to deduce the private key if you know the public key. http://www.webopedia.com/TERM/P/public_key_cryptography.html

(3) Artificial Intelligence is the theory and development of computer systems able to perform tasks that normally require human intelligence, such as visual perception, speech recognition, decision-making, and translation between languages.

(4) Open DataBase Connectivity, a standard database access method developed by the SQL Access group in 1992. The goal of ODBC is to make it possible to access any data from any application, regardless of which database management system (DBMS) is handling the data. http://www.webopedia.com/TERM/O/ODBC.html

(5) Short for Java Database Connectivity, a Java API that enables Java programs to execute SQL statements. This allows Java programs to interact with any SQL-compliant database. Since nearly all relational database management systems (DBMSs) support SQL, and because Java itself runs on most platforms, JDBC makes it possible to write a single database application that can run on different platforms and interact with different DBMSs. JDBC is similar to ODBC, but is designed specifically for Java programs, whereas ODBC is language-independent. http://www.webopedia.com/TERM/J/JDBC.html

(*) All company names are trademarks™ or registered® trademarks of their respective holders. Use of them does not imply any affiliation with or endorsement by them.